What Is an AI Image Detector

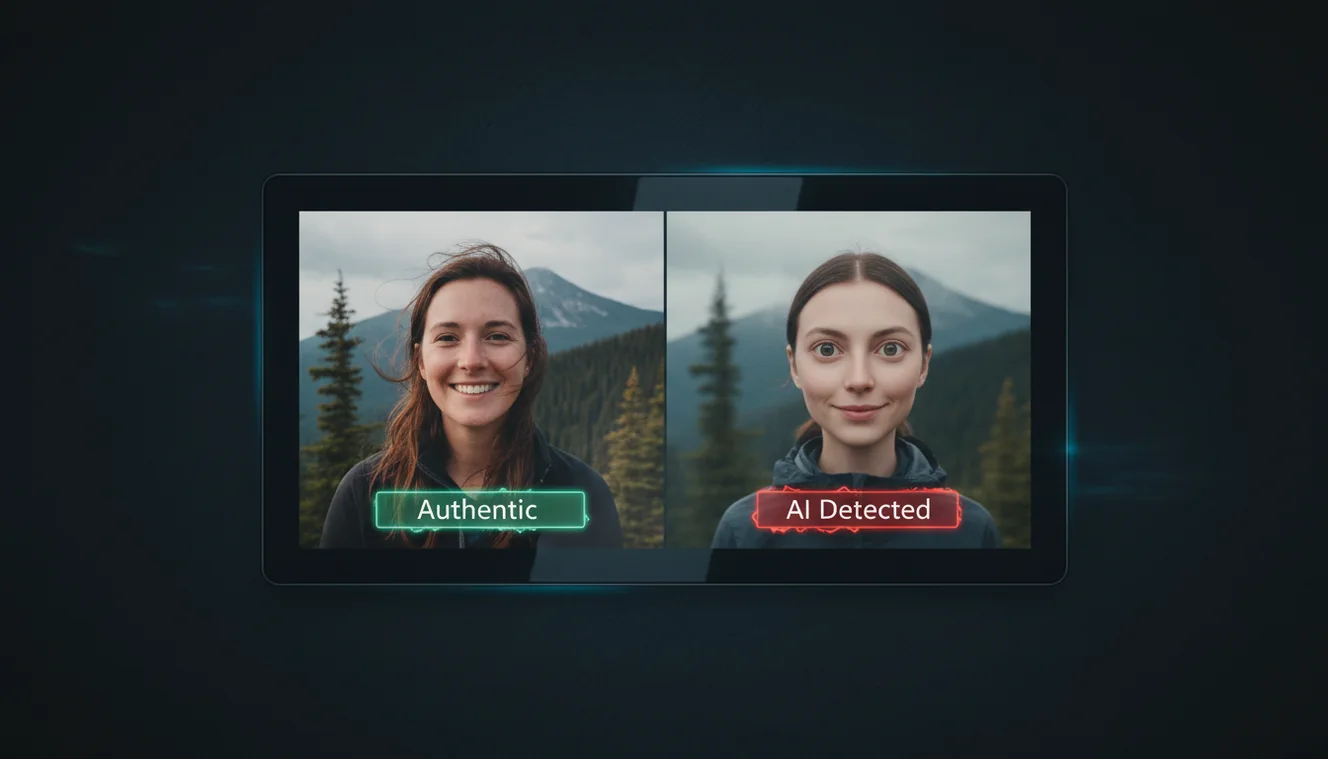

An AI image detector is a software tool that analyzes digital images to assess whether they were generated by artificial intelligence (e.g., Midjourney, DALL-E, Stable Diffusion) or captured by a camera. It examines visual artifacts, textures, lighting, and structural patterns that often distinguish AI-generated imagery from real photographs. The detector produces a probability or confidence score indicating how likely the image is to be AI-generated, along with specific observations about the analysis.

The core function is pattern recognition. AI image generators produce images through neural networks trained on millions of photographs and artworks. These models often introduce subtle or obvious artifacts: distorted hands, inconsistent text, unnatural reflections, or overly smooth surfaces. An AI image detector looks for these telltale signs. It may use machine learning models trained on datasets of both real and AI-generated images to classify new inputs. The detector does not "prove" authenticity; it provides an informed assessment based on observable features.

AI image detectors are distinct from text-based AI detector tools. The AI Checker on our homepage analyzes written content to identify AI-generated text, whereas an image detector focuses on visual content. Both address the same broader concern: distinguishing human-created content from machine-generated content as AI tools become increasingly capable.

How AI Image Detection Works

AI image detection typically relies on convolutional neural networks or vision-language models trained to recognize patterns associated with AI generation. Common techniques include analyzing frequency distributions, texture consistency, and edge coherence. Real photographs often have natural noise, sensor artifacts, and lighting that follows physical laws. AI-generated images may exhibit statistical anomalies that trained models can detect.
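To make the "natural noise" idea concrete, here is a minimal sketch of one hand-crafted statistic: the variance of each pixel's deviation from its 3x3 local mean. The function name `noise_residual_variance`, the window size, and the synthetic test images are illustrative assumptions. Production detectors generally learn their features from data rather than relying on a single measure like this, and a low value alone does not indicate AI generation, since denoised or heavily smoothed photographs also score low.

```python
import random
from statistics import pvariance


def noise_residual_variance(image):
    """Variance of (pixel - 3x3 local mean) over interior pixels.

    `image` is a grayscale image given as a list of equal-length rows
    of numbers. Border pixels are skipped because they lack a full
    3x3 neighborhood.
    """
    height, width = len(image), len(image[0])
    if height < 3 or width < 3:
        raise ValueError("image must be at least 3x3")

    residuals = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            neighborhood = [
                image[y + dy][x + dx]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            local_mean = sum(neighborhood) / 9
            residuals.append(image[y][x] - local_mean)
    return pvariance(residuals)


# Synthetic stand-ins (not real image data): a smooth gradient and the
# same gradient with Gaussian noise added to mimic sensor noise.
rng = random.Random(0)
smooth = [[float(x + y) for x in range(16)] for y in range(16)]
noisy = [[value + rng.gauss(0, 5) for value in row] for row in smooth]

print("smooth:", noise_residual_variance(smooth))
print("noisy: ", noise_residual_variance(noisy))
```

The noisy image yields a clearly larger residual variance than the smooth one. In practice, a statistic like this would be one input among many, not a verdict.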

Hands and fingers are a well-known weak point: many AI image generators produce extra digits, fused fingers, or anatomically incorrect shapes. Text within images is another: AI often renders letters with subtle distortions, missing parts, or nonsensical glyphs. Reflections and shadows may not align with the scene geometry. Lighting can appear uniform or inconsistent in ways that real cameras rarely produce. Detectors combine these signals into a composite assessment.
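One simple way to picture a composite assessment is a weighted average of per-cue scores. The sketch below assumes each cue (hands, text, reflections, lighting) has already been scored between 0 and 1 by some upstream check; the function name, cue names, weights, and scores are all hypothetical and chosen only for illustration. Real detectors typically learn how to combine signals rather than using fixed weights.

```python
def composite_score(cue_scores, weights):
    """Weighted average of per-cue scores, each expected in [0, 1].

    Only cues present in `cue_scores` are counted, so a cue that does
    not apply (for example, no hands in the image) can be left out.
    """
    for name, score in cue_scores.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"score for {name!r} must be in [0, 1]")

    total_weight = sum(weights[name] for name in cue_scores)
    if total_weight <= 0:
        raise ValueError("at least one cue with positive weight is required")

    weighted_sum = sum(cue_scores[name] * weights[name] for name in cue_scores)
    return weighted_sum / total_weight


# Hypothetical weights and scores, for illustration only.
WEIGHTS = {"hands": 0.3, "text": 0.3, "reflections": 0.2, "lighting": 0.2}
cues = {"hands": 0.9, "text": 0.7, "reflections": 0.2, "lighting": 0.4}

print(round(composite_score(cues, WEIGHTS), 2))
```

Normalizing by the weights of the cues actually present keeps the result in the 0 to 1 range even when some cues cannot be evaluated for a given image.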

Some detectors are trained on specific AI model outputs. For example, a model trained on Midjourney v5 images may recognize that style's characteristic aesthetics. Others are more general-purpose, looking for broad patterns across multiple generators. The trade-off is specificity versus generality: specialized detectors can be more accurate for known sources but may miss novel or hybrid outputs.

When to Use an AI Image Detector

Journalists and fact-checkers use AI image detectors to verify whether images submitted as evidence or news are authentic. Social media and content platforms may use them to flag or label AI-generated content. Educators and researchers use them to assess the authenticity of submissions. Individuals use them to satisfy curiosity about images they encounter online or to verify images before sharing.

In academic or professional contexts, an image detector can be part of a larger verification workflow. It complements human judgment: a detector may flag an image for review, but a human should decide how to act. For high-stakes decisions, such as evaluating evidence in legal proceedings or ruling on academic integrity cases, multiple verification methods and expert review are recommended.
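The workflow described above can be sketched as a small triage function. Everything here is an assumption made for illustration: the function name `triage`, the 0.5 flagging threshold, and the three outcome labels. The point is only that the detector's score routes an image to a person and never decides the outcome by itself.

```python
def triage(ai_probability, high_stakes=False, flag_threshold=0.5):
    """Route an image based on a detector score in [0, 1].

    Returns one of three hypothetical labels:
      "expert_review" - high-stakes case, always escalated
      "human_review"  - score at or above the flagging threshold
      "no_flag"       - score below the threshold
    """
    if not 0.0 <= ai_probability <= 1.0:
        raise ValueError("ai_probability must be in [0, 1]")

    # High-stakes cases go to experts regardless of the score, because
    # a low score is not proof of authenticity.
    if high_stakes:
        return "expert_review"

    if ai_probability >= flag_threshold:
        return "human_review"
    return "no_flag"


print(triage(0.82))
print(triage(0.10))
print(triage(0.10, high_stakes=True))
```

A suitable threshold depends on how costly missed detections are compared with false alarms in a given setting, so it would need tuning against the specific detector in use.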

Limitations of AI Image Detectors

AI image detectors have significant limitations and are not infallible. Newer AI models produce increasingly realistic output, and the gap between AI-generated and real images is narrowing, so a detector trained on older data may miss images from the latest generators (false negatives). Conversely, heavily edited real photographs (with filters, compositing, or compression) can trigger false positives.

Detection accuracy tends to drop on low-resolution or compressed images. Images that have been cropped, resized, or re-saved may lose or alter the artifacts that detectors rely on. Adversarial techniques, which deliberately tweak AI output to evade detection, exist and may become more common. No detector should be treated as a definitive arbiter of authenticity.
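A short worked calculation shows why a flag should not be read as proof. The sketch below applies Bayes' rule to three made-up numbers: the share of images that are actually AI-generated, the detector's true positive rate, and its false positive rate. None of these figures describe any real detector; they are assumptions chosen to illustrate the arithmetic.

```python
def probability_ai_given_flag(prior_ai, true_positive_rate, false_positive_rate):
    """Bayes' rule: P(image is AI-generated | detector flagged it)."""
    flagged_ai = true_positive_rate * prior_ai
    flagged_real = false_positive_rate * (1.0 - prior_ai)
    total_flagged = flagged_ai + flagged_real
    if total_flagged == 0:
        raise ValueError("the detector never flags anything with these rates")
    return flagged_ai / total_flagged


# Hypothetical numbers: 5% of images are AI-generated, the detector
# catches 90% of them, and wrongly flags 10% of real photographs.
posterior = probability_ai_given_flag(
    prior_ai=0.05,
    true_positive_rate=0.90,
    false_positive_rate=0.10,
)
print(round(posterior, 2))
```

Under these assumed rates, only about a third of flagged images would actually be AI-generated, because real photographs greatly outnumber AI images in the pool being checked.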

Ethical and privacy considerations apply. Analyzing images of people without consent raises privacy concerns. Using detectors to police or stigmatize AI-generated art without context can be problematic. The tool is best used as an aid to informed judgment, not as a replacement for critical thinking or human oversight.